What should administrators know about OpenShift?

OpenShift Container Platform is a platform for developing and running containerized applications. It is designed to allow applications and the data centers that support them to expand from just a few machines and applications to thousands of machines that serve millions of clients.

With its foundation in Kubernetes, OpenShift Container Platform incorporates the same technology that serves as the engine for massive telecommunications, banking, public services, streaming video, gaming, and other applications. Its implementation in open Red Hat technologies lets you extend your containerized applications beyond a single cloud to on-premises, hybrid, and multi-cloud environments.

OpenShift Architecture

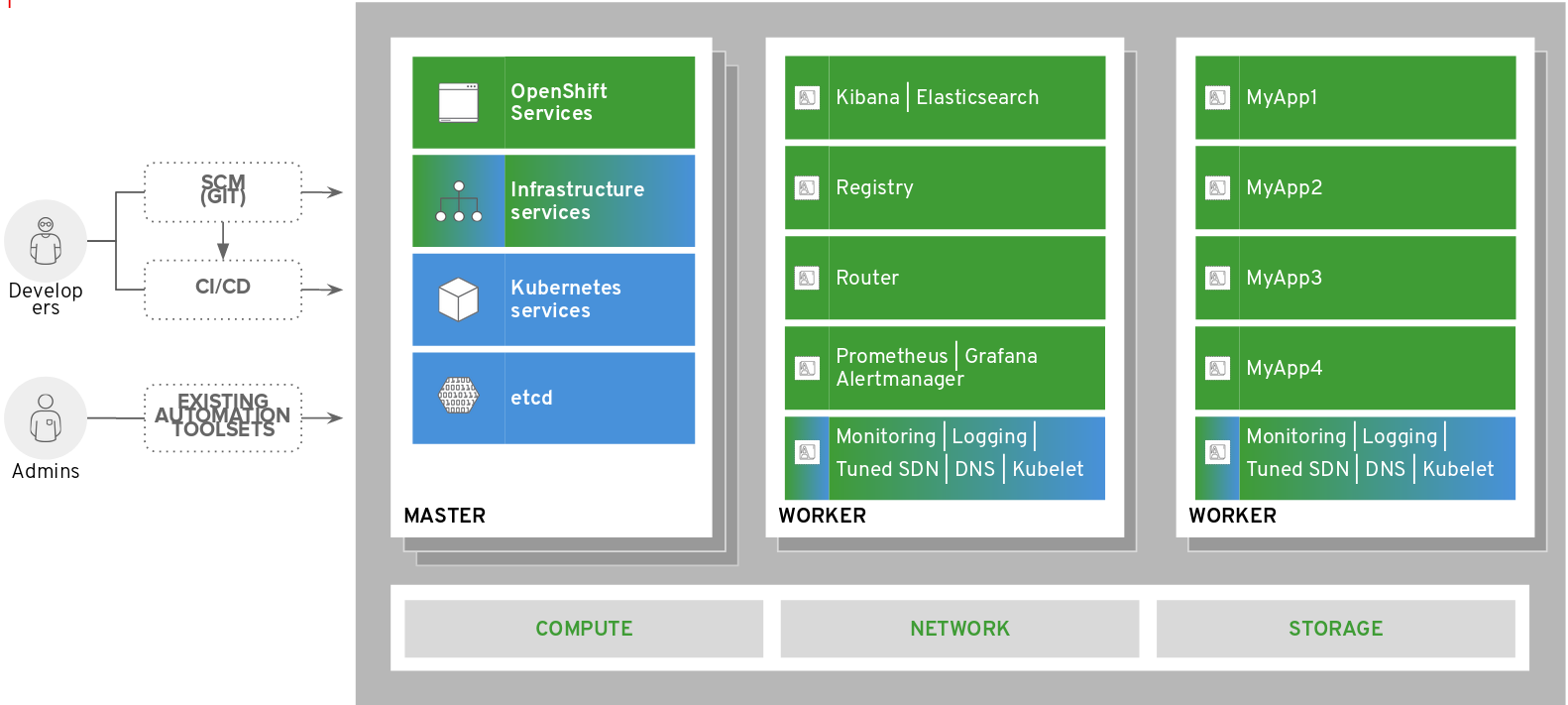

An OpenShift Container Platform cluster consists of two types of machines: master nodes and worker nodes. Master nodes form the control plane of the cluster and host the cluster management components, such as the etcd cluster configuration database, the Kubernetes API server, the OpenShift web console, and the Operator Lifecycle Manager. Worker nodes host all containerized OpenShift infrastructure services as well as your applications. This logical architecture translates into the following OpenShift Container Platform deployment reference architecture:

A Red Hat OpenShift Container Platform cluster consists of the following instances (physical or virtual):

- Master nodes – the cluster control plane. For high availability, an odd number of master instances is required (typically three).

- (Optional) Infrastructure worker nodes – dedicated worker nodes that host OpenShift infrastructure components such as logging, monitoring, the container image registry, and ingress routers.

- Application worker nodes – worker nodes that host your applications.

- (Optional) Container Storage nodes – dedicated worker nodes that host OpenShift Container Storage, which is based on Ceph and provides persistent storage for containers. A minimum of three nodes is required.
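The recommendation for an odd number of master nodes follows from how etcd, which runs on the masters, reaches agreement: a majority of members must be available. The sketch below is only a back-of-the-envelope calculation of that majority rule, not a sizing tool, and the function names are made up for this illustration.

```python
def quorum(members: int) -> int:
    """Smallest majority of an etcd cluster with `members` voting members."""
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in range(1, 6):
        print(f"{n} masters: quorum={quorum(n)}, "
              f"tolerated failures={fault_tolerance(n)}")
```

Going from three to four masters does not raise the number of failures the cluster can tolerate, which is why even member counts bring no availability benefit.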

Installation & Upgrade

In OpenShift Container Platform 4, if you have an account with the right permissions, you can deploy a production cluster in supported public and private clouds by running a single command and providing a few values. You can also customize your cloud installation or install your cluster in your data center if you use a supported platform.

For clusters that use Red Hat Enterprise Linux CoreOS for all machines, updating, or upgrading, OpenShift Container Platform is a simple, highly-automated process. Because OpenShift Container Platform completely controls the systems and services that run on each machine, including the operating system itself, from a central control plane, upgrades are designed to become automatic events. If your cluster contains RHEL worker machines, the control plane benefits from the streamlined update process, but you must perform more tasks to upgrade the RHEL machines.

Red Hat Enterprise Linux CoreOS

OpenShift Container Platform uses Red Hat Enterprise Linux CoreOS (RHCOS), a container-oriented operating system that combines some of the best features and functions of the CoreOS and Red Hat Atomic Host operating systems. RHCOS is specifically designed for running containerized applications from OpenShift Container Platform and works with new tools to provide fast installation, Operator-based management, and simplified upgrades.

Key RHCOS features include:

- Based on RHEL: The underlying operating system consists primarily of RHEL components. The same quality, security, and control measures that support RHEL also support RHCOS. For example, RHCOS software is in RPM packages, and each RHCOS system starts up with a RHEL kernel and a set of services that are managed by the systemd init system.

- Controlled immutability: Although it contains RHEL components, RHCOS is designed to be managed more tightly than a default RHEL installation. Management is performed remotely from the OpenShift Container Platform cluster. When you set up your RHCOS machines, you can modify only a few system settings. This controlled immutability allows OpenShift Container Platform to store the latest state of RHCOS systems in the cluster so it is always able to create additional machines and perform updates based on the latest RHCOS configurations.

- CRI-O container runtime: Although RHCOS contains features for running the OCI- and libcontainer-formatted containers that Docker requires, it incorporates the CRI-O container engine instead of the Docker container engine. By focusing on features needed by Kubernetes platforms, such as OpenShift Container Platform, CRI-O can offer specific compatibility with different Kubernetes versions. CRI-O also offers a smaller footprint and reduced attack surface than is possible with container engines that offer a larger feature set. At the moment, CRI-O is only available as a container engine within OpenShift Container Platform clusters.

- Set of container tools: For tasks such as building, copying, and otherwise managing containers, RHCOS replaces the Docker CLI tool with a compatible set of container tools. The podman CLI tool supports many container runtime features, such as running, starting, stopping, listing, and removing containers and container images. The skopeo CLI tool can copy, authenticate, and sign images. You can use the crictl CLI tool to work with containers and pods from the CRI-O container engine.

- rpm-ostree upgrades: RHCOS features transactional upgrades using the rpm-ostree system. Updates are delivered by means of container images and are part of the OpenShift Container Platform update process. When deployed, the container image is pulled, extracted, and written to disk, then the bootloader is modified to boot into the new version. The machine will reboot into the update in a rolling manner to ensure cluster capacity is minimally impacted.

- Updated through MachineConfigOperator: In OpenShift Container Platform, the Machine Config Operator handles operating system upgrades. Instead of upgrading individual packages, as is done with yum upgrades, rpm-ostree delivers upgrades of the OS as an atomic unit. The new OS deployment is staged during upgrades and goes into effect on the next reboot. If something goes wrong with the upgrade, a single rollback and reboot returns the system to the previous state. RHCOS upgrades in OpenShift Container Platform are performed during cluster updates.
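The staged, atomic upgrade behavior described above can be pictured with a small toy model. This is not how rpm-ostree or the Machine Config Operator are implemented; the `Host` class and the version strings are hypothetical and only illustrate the stage, reboot, and rollback sequence.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Host:
    """Toy model of a machine with a booted, a previous, and a staged OS."""
    booted: str
    previous: Optional[str] = None
    staged: Optional[str] = None

    def stage(self, version: str) -> None:
        # Staging prepares the new OS without touching the running system.
        self.staged = version

    def reboot(self) -> None:
        # A staged deployment takes effect only on reboot, as one atomic switch.
        if self.staged is not None:
            self.previous, self.booted, self.staged = (
                self.booted, self.staged, None)

    def rollback(self) -> None:
        # Rollback selects the previous deployment for the next boot.
        if self.previous is not None:
            self.staged = self.previous


if __name__ == "__main__":
    host = Host(booted="os-v1")      # hypothetical version labels
    host.stage("os-v2")
    print("before reboot:", host.booted)
    host.reboot()
    print("after reboot:", host.booted)
    host.rollback()
    host.reboot()
    print("after rollback:", host.booted)
```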

Networking

OpenShift ensures that Pods are able to network with each other, and allocates each Pod an IP address from an internal network. This ensures all containers within the Pod behave as if they were on the same host. Giving each Pod its own IP address means that Pods can be treated like physical hosts or virtual machines in terms of port allocation, networking, naming, service discovery, load balancing, application configuration, and migration.

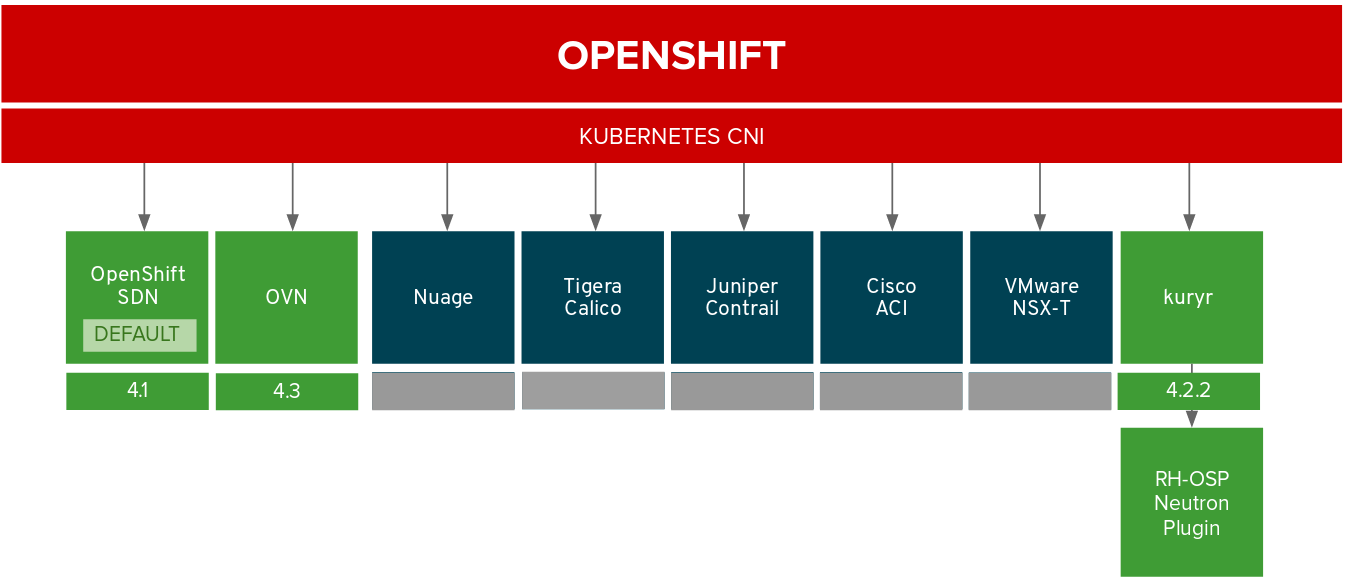

Internal OpenShift networking is based on the Kubernetes Container Network Interface (CNI). Currently, the default OpenShift CNI plugin is OpenShift SDN (in network policy mode), which configures an overlay network using Open vSwitch. OVN is the next generation of the OpenShift SDN plugin and is expected to become the default in upcoming OpenShift releases. OpenShift also supports a number of third-party CNI plugins, as well as the Kuryr plugin for OpenStack Neutron integration.
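To make the Pod addressing more concrete, the following sketch assumes that the SDN carves a cluster-wide Pod network into one subnet per node. The CIDR and prefix length used here are assumptions for illustration; check the network configuration of your own cluster for the real values.

```python
import ipaddress

# Assumed values for illustration only.
CLUSTER_NETWORK = ipaddress.ip_network("10.128.0.0/14")
HOST_PREFIX = 23  # prefix length of the subnet handed to each node


def node_subnets(cluster_network, host_prefix):
    """Return the per-node Pod subnets carved out of the cluster network."""
    return list(cluster_network.subnets(new_prefix=host_prefix))


if __name__ == "__main__":
    subnets = node_subnets(CLUSTER_NETWORK, HOST_PREFIX)
    print("node subnets available:", len(subnets))
    print("first node subnet:", subnets[0])
    print("addresses per node subnet:", subnets[0].num_addresses)
```

With these assumed values, the prefix length bounds both the number of nodes the Pod network can serve and the number of Pod addresses available on each node.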

In addition, OpenShift Container Platform includes the Multus CNI plugin, which allows chaining of CNI plugins. During cluster installation, you configure your default Pod network. The default network handles all ordinary network traffic for the cluster. You can define an additional network based on the available CNI plugins and attach one or more of these networks to your Pods. You can define more than one additional network for your cluster, depending on your needs. This gives you flexibility when you configure Pods that deliver network functionality, such as switching or routing.
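As a rough sketch of what attaching an additional network looks like, the code below builds a NetworkAttachmentDefinition manifest and a Pod annotation as plain Python dictionaries. The network name, namespace, interface name, and CNI settings are hypothetical, and in a real cluster additional networks may be managed through the cluster network configuration rather than created by hand.

```python
import json


def network_attachment(name, namespace, cni_config):
    """Build a NetworkAttachmentDefinition manifest as a plain dict."""
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name, "namespace": namespace},
        # The CNI configuration is stored as a JSON string.
        "spec": {"config": json.dumps(cni_config)},
    }


def attach_networks(pod, network_names):
    """Annotate a Pod manifest so Multus attaches the named networks."""
    annotations = pod.setdefault("metadata", {}).setdefault("annotations", {})
    annotations["k8s.v1.cni.cncf.io/networks"] = ",".join(network_names)
    return pod


if __name__ == "__main__":
    # Hypothetical macvlan network on a hypothetical host interface "eth1".
    nad = network_attachment(
        "macvlan-net", "demo",
        {"cniVersion": "0.3.1", "type": "macvlan", "master": "eth1",
         "ipam": {"type": "dhcp"}},
    )
    pod = attach_networks({"metadata": {"name": "router"}}, ["macvlan-net"])
    print(json.dumps(nad, indent=2))
    print(json.dumps(pod, indent=2))
```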

Monitoring

OpenShift Container Platform includes a pre-configured, pre-installed, and self-updating monitoring stack that is based on the Prometheus open source project. It monitors cluster components and ships with a set of alerts that immediately notify the cluster administrator about problems, as well as a set of Grafana dashboards.

You can use OpenShift Monitoring for your own services in addition to monitoring the cluster. This way, you do not need an additional monitoring solution, which helps keep monitoring centralized. Additionally, you can extend access to the metrics of your services beyond cluster administrators, so that developers and arbitrary users can access these metrics.
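For your own services to be monitored, they need to expose metrics in a form Prometheus can scrape. The sketch below renders one metric in the Prometheus text exposition format using only the standard library; the metric name and labels are invented for the example, and a real service would more likely use a Prometheus client library.

```python
def render_metric(name, help_text, metric_type, samples):
    """Render one metric family in the Prometheus text exposition format.

    `samples` is a list of (labels_dict, value) pairs.
    """
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        label_str = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
        suffix = f"{{{label_str}}}" if label_str else ""
        lines.append(f"{name}{suffix} {value}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical application metric.
    print(render_metric(
        "orders_total", "Orders processed.", "counter",
        [({"status": "ok"}, 3), ({"status": "failed"}, 1)],
    ), end="")
```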

Log Aggregation

As an OpenShift Container Platform cluster administrator, you can deploy cluster logging to aggregate all the logs from your OpenShift Container Platform cluster, such as node system logs, application container logs, and so forth.

The cluster logging components are based upon Elasticsearch, Fluentd, and Kibana (EFK). The collector, Fluentd, is deployed to each node in the OpenShift Container Platform cluster. It collects all node and container logs and writes them to Elasticsearch (ES). Kibana is the centralized web UI where users and administrators can create rich visualizations and dashboards with the aggregated data.
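The collector's job can be pictured as turning raw container log lines into structured documents. The sketch below assumes the space-separated line layout written by CRI container runtimes (timestamp, stream, partial/full tag, message) and produces a dictionary ready to be indexed. The output field names are illustrative and are not the schema the cluster logging stack actually uses.

```python
def parse_cri_line(line, node, pod, container):
    """Split one CRI-style log line into a structured document.

    Assumed layout: "<timestamp> <stream> <P|F> <message>".
    """
    timestamp, stream, tag, message = line.rstrip("\n").split(" ", 3)
    return {
        "@timestamp": timestamp,
        "stream": stream,            # "stdout" or "stderr"
        "partial": tag == "P",       # "P" marks a partial line, "F" a full one
        "message": message,
        # Metadata a collector would add so logs can be filtered later.
        "kubernetes": {"host": node, "pod_name": pod,
                       "container_name": container},
    }


if __name__ == "__main__":
    # Hypothetical log line and object names.
    doc = parse_cri_line(
        "2020-05-06T10:39:00.123456789+00:00 stdout F order 42 accepted",
        node="worker-1", pod="shop-7d9f", container="api",
    )
    print(doc)
```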

Persistent Storage

OpenShift Container Platform uses the Kubernetes persistent volume (PV) framework to allow cluster administrators to provision persistent storage for a cluster. Developers can use persistent volume claims (PVCs) to request PV resources without having specific knowledge of the underlying storage infrastructure.
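A claim is just a small manifest that states how much storage is needed and how it will be accessed. As a minimal sketch, the function below builds such a PersistentVolumeClaim as a Python dictionary; the claim name, namespace, size, and storage class name are hypothetical.

```python
import json


def persistent_volume_claim(name, namespace, size, access_mode,
                            storage_class=None):
    """Build a PersistentVolumeClaim manifest as a plain dict."""
    spec = {
        "accessModes": [access_mode],
        "resources": {"requests": {"storage": size}},
    }
    if storage_class is not None:
        # Omitting the class leaves the choice to the cluster default.
        spec["storageClassName"] = storage_class
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


if __name__ == "__main__":
    pvc = persistent_volume_claim("db-data", "shop", "10Gi", "ReadWriteOnce")
    print(json.dumps(pvc, indent=2))
```

The developer names only a size and an access mode; which storage backend satisfies the claim is decided by the administrator's configuration.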

OpenShift supports multiple types of storage.

A PersistentVolume can be mounted on a node in any way supported by the storage provider. Providers have different capabilities and each PV’s access modes are set to the specific modes supported by that particular volume.

The access modes supported by a storage provider determine how stateful pods can scale across OpenShift cluster nodes. For example, a ReadWriteOnce (RWO) volume can be mounted on only a single cluster node at a time, so all pods that consume this persistent volume must run on that node. A ReadWriteMany (RWX) volume can be mounted on multiple cluster nodes at the same time, so the pods that use it can be distributed across these nodes.
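The effect of the access mode on pod placement can be expressed as a simple check. This is a toy model of the rule described above, not scheduler code; the function name and node names are made up.

```python
def placement_allowed(access_mode, pod_nodes):
    """Return True if pods on the given nodes can share one volume.

    `pod_nodes` lists the node each pod would run on.
    """
    distinct_nodes = set(pod_nodes)
    if access_mode == "ReadWriteOnce":
        # The volume can be mounted on a single node only.
        return len(distinct_nodes) <= 1
    if access_mode == "ReadWriteMany":
        # The volume can be mounted on many nodes at once.
        return True
    raise ValueError(f"access mode not covered by this sketch: {access_mode}")


if __name__ == "__main__":
    print(placement_allowed("ReadWriteOnce", ["worker-1", "worker-1"]))
    print(placement_allowed("ReadWriteOnce", ["worker-1", "worker-2"]))
    print(placement_allowed("ReadWriteMany", ["worker-1", "worker-2"]))
```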

Resource Quotas and Limits

OpenShift provides two types of resource quotas: project and multi-project resource quotas.

A resource quota, defined by a ResourceQuota object, provides constraints that limit aggregate resource consumption per project. It can limit the quantity of objects that can be created in a project by type, as well as the total amount of compute resources and storage that may be consumed by resources in that project.

A multi-project quota, defined by a ClusterResourceQuota object, allows quotas to be shared across multiple projects. Resources used in each selected project are aggregated and that aggregate is used to limit resources across all the selected projects.
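The difference between the two quota types comes down to what is summed before comparing against the limit. The sketch below models that aggregation with plain integers; the resource names, project names, and numbers are hypothetical, and real quota enforcement involves more than this check.

```python
def within_quota(hard, usage_by_project, requested):
    """Check a new request against a quota shared by the listed projects.

    `hard` maps resource names to limits, `usage_by_project` maps each
    selected project to its current usage, `requested` is the new demand.
    A single-project quota is the case with exactly one project listed.
    """
    for resource, amount in requested.items():
        if resource not in hard:
            continue  # resources without a limit are not constrained
        used = sum(u.get(resource, 0) for u in usage_by_project.values())
        if used + amount > hard[resource]:
            return False
    return True


if __name__ == "__main__":
    hard = {"pods": 10, "cpu_millicores": 4000}
    usage = {
        "shop-dev": {"pods": 4, "cpu_millicores": 1500},
        "shop-test": {"pods": 5, "cpu_millicores": 2000},
    }
    print(within_quota(hard, usage, {"pods": 1, "cpu_millicores": 500}))
    print(within_quota(hard, usage, {"pods": 2}))
```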

Security

OpenShift Container Platform provides a set of built-in security features, including:

- Host & Runtime security

RHCOS is specifically designed for running containerized applications. The same quality, security, and control measures that support RHEL also support RHCOS, including SELinux, seccomp, cgroups, and namespaces.

- User Identity and Access Management across all components of the Platform

For users to interact with OpenShift Container Platform, they must first authenticate to the cluster. The authentication layer identifies the user associated with requests to the OpenShift Container Platform API. The authorization layer then uses information about the requesting user to determine if the request is allowed.

As a cluster administrator, you can configure authentication for OpenShift Container Platform.

- Role-Based Access Controls

Role-based access control (RBAC) objects determine whether a user is allowed to perform a given action within a project. Cluster administrators can use cluster roles and bindings to control who has various access levels to OpenShift Container Platform itself and to all projects. Developers can use local roles and bindings to control who has access to their projects. Note that authorization is a separate step from authentication, which determines the identity of whoever is taking the action.

There are two levels of RBAC roles and bindings that control authorization:

Cluster RBAC: roles and bindings that are applicable across all projects. Cluster roles exist cluster-wide, and cluster role bindings can reference only cluster roles.

Local RBAC: roles and bindings that are scoped to a given project. While local roles exist only in a single project, local role bindings can reference both cluster and local roles.
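The two levels can be illustrated with a toy authorization check. This is a simplified model of the rules just described, not the platform's authorizer: roles are reduced to sets of (verb, resource) pairs, and all user, role, and project names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Binding:
    user: str
    role_kind: str            # "ClusterRole" or "Role"
    role: str
    project: Optional[str]    # None means a cluster role binding


def is_allowed(user, verb, resource, project,
               cluster_roles, local_roles, bindings):
    """Return True if any binding grants `verb` on `resource` in `project`."""
    for b in bindings:
        if b.user != user:
            continue
        if b.project is not None and b.project != project:
            continue  # a local binding applies only inside its own project
        if b.role_kind == "ClusterRole":
            rules = cluster_roles.get(b.role, set())
        elif b.project is not None:
            rules = local_roles.get((b.project, b.role), set())
        else:
            rules = set()  # cluster bindings can reference only cluster roles
        if (verb, resource) in rules:
            return True
    return False


if __name__ == "__main__":
    cluster_roles = {"view": {("get", "pods")},
                     "admin": {("get", "pods"), ("delete", "pods")}}
    local_roles = {("shop", "deployer"): {("create", "deployments")}}
    bindings = [
        Binding("bob", "ClusterRole", "view", None),      # cluster-wide
        Binding("alice", "ClusterRole", "admin", "shop"),  # local binding
        Binding("carol", "Role", "deployer", "shop"),      # local role
    ]
    args = (cluster_roles, local_roles, bindings)
    print(is_allowed("bob", "get", "pods", "billing", *args))
    print(is_allowed("alice", "delete", "pods", "billing", *args))
```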

- Security context constraints for Pods

Similar to the way that RBAC resources control user access, administrators can use Security Context Constraints (SCCs) to control permissions for pods. These permissions include actions that a pod, a collection of containers, can perform and what resources it can access. You can use SCCs to define a set of conditions that a pod must run with in order to be accepted into the system.

SCCs allow an administrator to control: whether a pod can run privileged containers, the Linux capabilities that a container can request, the use of host directories as volumes, the SELinux context of the container, the container user ID, the use of host namespaces and networking, the allocation of an FSGroup that owns the pod's volumes, the configuration of allowable supplemental groups, whether a container requires a read-only root file system, the usage of volume types, and the configuration of allowable seccomp profiles.
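A few of these controls can be sketched as a simple admission check. The SCC keys below follow the field names of the SecurityContextConstraints object, but the pod is a flattened, hypothetical structure and the check covers only a small subset of what real SCC admission evaluates.

```python
def scc_violations(pod, scc):
    """List the reasons a (simplified) pod does not satisfy an SCC."""
    problems = []
    if pod.get("privileged", False) and not scc.get(
            "allowPrivilegedContainer", False):
        problems.append("privileged containers are not allowed")
    if pod.get("hostNetwork", False) and not scc.get(
            "allowHostNetwork", False):
        problems.append("host networking is not allowed")
    extra_caps = set(pod.get("capabilities", [])) - set(
        scc.get("allowedCapabilities", []))
    if extra_caps:
        problems.append(
            "capabilities not allowed: " + ", ".join(sorted(extra_caps)))
    bad_volumes = set(pod.get("volumeTypes", [])) - set(
        scc.get("volumes", []))
    if bad_volumes:
        problems.append(
            "volume types not allowed: " + ", ".join(sorted(bad_volumes)))
    return problems


if __name__ == "__main__":
    # Hypothetical restrictive constraint set.
    scc = {
        "allowPrivilegedContainer": False,
        "allowHostNetwork": False,
        "allowedCapabilities": [],
        "volumes": ["configMap", "emptyDir", "persistentVolumeClaim",
                    "secret"],
    }
    pod = {"privileged": True, "capabilities": ["NET_ADMIN"],
           "volumeTypes": ["hostPath"]}
    for problem in scc_violations(pod, scc):
        print(problem)
```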

- Project namespaces isolation

A project allows a community of users to organize and manage their content in isolation from other communities. Each project can have its own RBAC settings, subnet, hardware resource allocation, cluster node binding, and secret management.

- Integrated SDN – NetworkPolicy is the default

In OpenShift Container Platform 4, OpenShift SDN supports NetworkPolicy in its default network isolation mode. In addition, you can control the IP addresses used for egress traffic and configure an egress firewall to limit access to networks outside of the OpenShift cluster. NetworkPolicy makes it possible to create microsegmentation between the application services deployed on the cluster.
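As a minimal sketch of microsegmentation, the function below builds a NetworkPolicy manifest that admits ingress traffic only from Pods in the same project. The policy name and project name are hypothetical; the manifest is built as a Python dictionary so it can be printed or serialized.

```python
import json


def allow_same_namespace(namespace):
    """Build a NetworkPolicy allowing ingress only from the same namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-same-namespace", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every Pod in the namespace.
            "podSelector": {},
            "policyTypes": ["Ingress"],
            # Only peers matching this selector (same namespace) may connect.
            "ingress": [{"from": [{"podSelector": {}}]}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(allow_same_namespace("shop"), indent=2))
```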

- Integrated & extensible secrets management

The Secret object type provides a mechanism to hold sensitive information such as passwords, OpenShift Container Platform client configuration files, private source repository credentials, and so on. Secrets decouple sensitive content from the pods. You can mount secrets into containers using a volume plug-in, or the system can use secrets to perform actions on behalf of a pod.
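The sketch below shows the shape of a Secret manifest, built as a Python dictionary with made-up names and values. Values in the `data` field are base64-encoded, which is an encoding and not encryption, so access to Secret objects still has to be restricted.

```python
import base64
import json


def make_secret(name, namespace, values):
    """Build an Opaque Secret manifest with base64-encoded values."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


if __name__ == "__main__":
    # Hypothetical credentials for illustration only.
    secret = make_secret("db-credentials", "shop",
                         {"username": "app", "password": "s3cr3t"})
    print(json.dumps(secret, indent=2))
```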

Operators

Operators are pieces of software that ease the operational complexity of running Kubernetes-native applications or services. They watch over a Kubernetes environment (such as OpenShift Container Platform) and use its current state to make decisions in real time. Advanced Operators are designed to handle not only installation but also upgrades, and to react to failures automatically.
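At its core, an Operator repeatedly compares the desired state with the observed state and acts on the difference. The sketch below shows one such reconciliation step in a deliberately simplified form; the state fields and action names are invented for this example, and real Operators are typically built with the Operator Framework rather than written like this.

```python
def reconcile(desired, observed):
    """Return the actions needed to move the observed state to the desired one.

    `observed` is None when the application is not installed yet.
    """
    if observed is None:
        return [("install", desired["version"])]
    actions = []
    if observed["version"] != desired["version"]:
        actions.append(("upgrade", desired["version"]))
    missing = desired["replicas"] - observed["ready_replicas"]
    if missing > 0:
        # React to failed or missing instances by restoring capacity.
        actions.append(("scale_up", missing))
    return actions


if __name__ == "__main__":
    desired = {"version": "2.1", "replicas": 3}
    print(reconcile(desired, None))
    print(reconcile(desired, {"version": "2.0", "ready_replicas": 2}))
    print(reconcile(desired, {"version": "2.1", "ready_replicas": 3}))
```

Running the step again after the actions have been applied yields an empty list, which is the steady state an Operator aims for.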

In OpenShift Container Platform 4, the Operator Lifecycle Manager (OLM) helps users install, update, and manage the lifecycle of all Operators and their associated services running across their clusters. It is part of the Operator Framework, an open source toolkit designed to manage Kubernetes native applications (Operators) in an effective, automated, and scalable way.

OpenShift Container Platform 4 has an Operator-first architecture, which means that all core components as well as optional components are managed by Operators.

The OperatorHub is available via the OpenShift Container Platform web console and is the interface that cluster administrators use to discover and install Operators. With one click, an Operator can be pulled from its off-cluster source, installed and subscribed on the cluster, and made ready for engineering teams to manage the product in a self-service manner across deployment environments using the Operator Lifecycle Manager.

10:39 AM, May 06

Author:

Principal Solutions Architect

Jarosław Stakun

Jaroslaw works as a Principal Solutions Architect at Red Hat and is responsible for delivery and solution selling based on Red Hat OpenShift Container Platform and Red Hat Application Services in the region of Central and Eastern Europe.